Computational analysis of the Live Music Archive (CALMA) & the Grateful Dead project

Thomas Wilmering, Queen Mary University of London

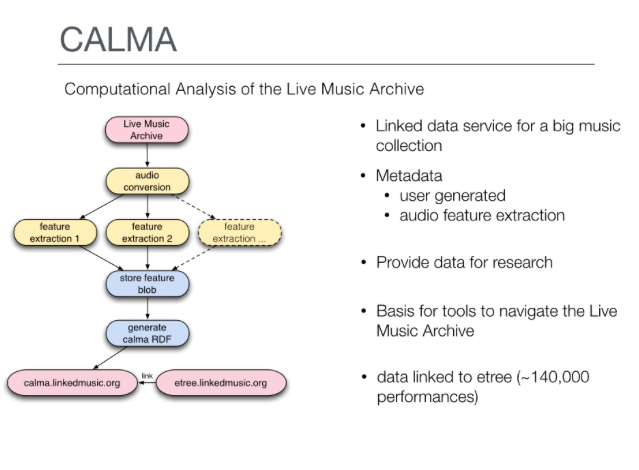

Description: CALMA

The main objectives of this demonstrator are to enable innovative applications related to live music in music informatics and music information retrieval, to develop novel ways of navigating large music collections for an improved user experience, and to support scholarship in popular musicology. It includes a Linked Data service exposing substantial data about live music recordings from the Internet Archive etree collection: core and contextual metadata linked with existing popular Semantic Web resources, together with the output of content-based feature analyses (tempo, key, etc.) of the audio recordings.

This linkage between contextual and feature-based metadata makes it possible to verify the contextual metadata, which is user-provided and potentially noisy, erroneous, or incomplete, against cues from the audio content. It also supports informed analysis of the many repeat performances in the collection (bands performing the same songs at multiple venues and over multiple years), enabling exploration of how a band’s performances evolve along contextual dimensions, such as the tempo of a certain song over time. Further contextual explorations are also possible, e.g. of how audience reaction between tracks relates to music venues and locations.

The RCalma analytical workflow tool exploits this Linked Data by querying the data sources from within the RStudio data science environment. A query on performance metadata returns feature data as standard R data frames, enabling easy plotting and analysis.
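The tempo-over-time exploration mentioned above can be illustrated with a minimal sketch. It is not part of RCalma (which works in R) and does not query the CALMA Linked Data service; it only assumes that each performance of a song has already been reduced to a date and an estimated tempo. The function name `tempo_trend` and the sample values are hypothetical placeholders, not measurements from the collection.

```python
from datetime import date


def tempo_trend(performances):
    """Least-squares slope of tempo against time, in BPM per year.

    `performances` is a list of (date, tempo_bpm) pairs for one song.
    This input shape is an assumption made for illustration only.
    """
    if len(performances) < 2:
        raise ValueError("need at least two performances")

    # Express each date in (approximate) years so the slope reads as BPM/year.
    xs = [d.toordinal() / 365.25 for d, _ in performances]
    ys = [bpm for _, bpm in performances]

    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n

    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("all performances share the same date")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


if __name__ == "__main__":
    # Placeholder values, not real analysis output.
    sample = [
        (date(1970, 5, 2), 118.0),
        (date(1972, 8, 27), 121.5),
        (date(1977, 5, 8), 126.0),
    ]
    print(f"tempo trend: {tempo_trend(sample):+.2f} BPM per year")
```

A positive slope would suggest the song was played faster in later years, a negative one slower. A straight-line fit is only one simple choice here; noisy tempo estimates or a small number of performances may call for a more robust method.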

Description: Grateful Dead

Branching off the CALMA project, the Exploration Tool for Grateful Dead Live Performances demonstrator is a Web application developed specifically for exploring Grateful Dead concerts. It aims to demonstrate how Semantic Audio and Linked Data technologies can improve the user experience of browsing and exploring music collections. It is motivated by the ongoing interest in detailed descriptions of Grateful Dead performances, evidenced by the large amount of information available on the Web detailing various aspects of those events. The application links the large number of Grateful Dead concert recordings available in the Internet Archive with audio analysis data, and retrieves additional information and artefacts (e.g. band lineup, photos, scans of tickets and posters, reviews) from existing Web sources, so that users can explore and visualise the collection.

Publications

Wilmering, T., Fazekas, G., Dixon, S., Page, K., Bechhofer, S. (2015), “Towards High Level Feature Extraction from Large Live Music Recording Archives”, International Conference on Machine Learning (ICML), Machine Learning for Music Discovery Workshop, 6-11 July, Lille, France.

Wilmering, T., Fazekas, G., Dixon, S., Bechhofer, S., Page, K. (2015), “Automating Annotation of Media with Linked Data Workflows”, Third International Workshop on Linked Media (LiME 2015) co-located with the WWW’15 conference, 18-22 May, Florence, Italy.

Bechhofer, S., Dixon, S., Fazekas, G., Wilmering, T., Page, K. (2014), “Computational Analysis of the Live Music Archive”.