In August 2016, FAST interviewed Dr David Weigl, one of the FAST partners from the Oxford e-Research Centre, Oxford University, about the FAST project.

1. Could you please introduce yourself?

I’m originally from Vienna, Austria. I moved to Scotland when I was 18, where I studied at the University of Edinburgh’s School of Informatics, and played bass in Edinburgh’s finest (only!) progressive funk rock band. After a few years in industry, I decided to return to academia to combine my research interests in informatics and cognitive science with my passion for music, pursuing a PhD in Information Studies at McGill University. Montreal is an amazing place to conduct interdisciplinary music research; at the Centre for Interdisciplinary Research in Music Media and Technology (CIRMMT), I was able to collaborate directly with colleagues in the fields of musicology, music tech, cognitive psychology, library and information science, and computer science, while benefiting from even more diverse perspectives, from audio engineering to intellectual property law. For the past couple of years, I’ve had the pleasure of working at the University of Oxford’s e-Research Centre, an even more interdisciplinary place: here at the OeRC, researchers supporting Digital Humanities projects regularly exchange notes with projects down the hall in radio astronomy, bio-informatics, energy consumption, and climate prediction.

2. What is your role within the project?

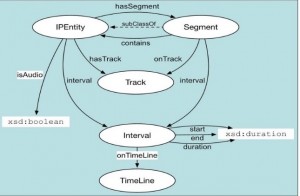

My role in the FAST project revolves around the application of semantic technologies and Linked Data to music information in various forms: from features describing the audio signal extracted from live music recordings, via symbolic music notation, to bibliographic information, including catalogue data and digitised page scans from the British Library and other sources. The projects I work on support complex interlinking between these musical resources and tie in external contextual information, enabling rich, unified exploration of the data.

3. Which would you say are the most exciting areas of research in music currently?

Research into music information retrieval (MIR) and related areas of study has been ongoing since the turn of the millennium, when new digital affordances, technologies such as the MP3 format, and the widespread adoption of the Internet suddenly made digital music information ubiquitously available. The music industry took a long time to respond to the corresponding challenges and opportunities, but products such as Spotify, Pandora, and YouTube are now reaching a level of maturity that enables exciting new avenues of music discovery. Yet we are only listening to the tip of the iceberg, as it were. Many powerful techniques developed by the MIR community have not yet been exploited by industry; as more and more of these research outcomes are integrated into user-facing systems, new ways of listening to and interacting with music will become available.

4. What, in your opinion, makes this research project different to other research projects in the same discipline?

The project is cross-disciplinary and multi-institutional, bringing together collaborative research into digital signal processing, data modelling, the incorporation of semantic context, and ethnographic study across all stages of the music lifecycle, from production to consumption. Combined with a long-term outlook that seeks to predict and inform the shape of the music industry over the coming decades, this makes FAST a particularly exciting project to be involved in!

5. What are the research questions you find most inspiring within your area of study?

I am especially interested in the notion of relevance when searching for music. This seems like such a simple notion when searching for a particular song – either the retrieved result is relevant to the searcher, or it isn’t – but the resilience of musical identity, retained through live performances, cover versions, remixes, sampling, and quotation, swiftly makes things more complicated. Deciding what is relevant becomes even more difficult when the listener is engaging in more abstract searches, e.g. for music to fulfil a certain purpose. For example, what makes a great song to exercise to? Presumably, some aspects – liveliness, energy, a solid beat – are universal, yet the “correct” answer will vary according to the listener’s expectations, listening history, and taste profile – be it Metallica, Daft Punk, or Beethoven.

6. What, in your opinion, is the value of the connections established between the project research and the industry?

I think such connections are valuable for numerous reasons. From the academic side, access to industry perspectives allows us to validate our assumptions about requirements and use cases; application of academic outputs within industry further acts as a sort of impact multiplier, ensuring our results are applicable to the real world. For industry, the value is in exposure and access to the latest state of research, fuelled by transparent, multi-institutional collaboration.

7. What can academic research in music bring to society?

On the one hand, advances in music information research have allowed us to conquer the flood of digital music available through modern technologies, enabling efficient and usable storage and retrieval. Each of us carries the Great Jukebox in the Sky in our pockets; listeners are able to access incredibly vast quantities of music wherever and whenever they like, and are better equipped than ever to inform themselves about the latest releases and to discover hidden gems that perfectly fit their taste and situation. Musicologists are able to subject entire catalogues to levels of scholarly analysis previously reserved for individual pieces, and can cross-reference works by different composers, from different time periods or cultures, with such ease that the complexity of the interlinking fades into the background.

On the other hand, such research has democratised music making and music distribution, providing artists with immediate access to their (potential) audience. Academia and industry must collaborate to ensure that monetisation is similarly democratised, to protect and strengthen music composition and performance as a viable career path for talented and creative individuals.

8. Please tell me why you find it valuable to do academic research related to music.

I’m very fortunate in that I get to combine my academic interests with my personal passion for music. I think that every researcher in the field feels this way; everybody I talk to at conferences plays an instrument, or sings, or produces music in their spare time. Music is inherently exciting – when I’m asked about my research at a social gathering, and I answer “information retrieval,” “linked data,” or “cognitive science”, I get a polite nod of the head; when I answer that I work with music and information, people’s eyes light up!

9. What are you working on at the moment?

I’m working on a number of different projects combining music with external contextual information using Linked Data and semantic technologies.

I have developed a Semantic Alignment and Linking Tool (SALT) that supports the linking of complementary datasets from different sources. We’ve applied it to an Early Music use case, combining radio programme data from the Early Music Show on BBC Radio 3 with library catalogue metadata and beautiful digitised images of musical scores from the British Library, enabling exploration of the unified data through a simple web interface.

I’m also working on the Computational Analysis of the Live Music Archive (CALMA), a project that enables the exploration of audio feature data extracted from a massive archive of live music recordings. For instance, we have access to hundreds of recorded renditions of the same song by the same band, performed over many years and at many different venues, allowing us to track the musical evolution of the song over time and according to performance context: does the song’s average tempo increase over the years? Do the band play more energetically in front of a home crowd?
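The two questions above can be sketched as simple aggregations over per-performance features. This is a minimal illustration, not CALMA's actual pipeline: it assumes each rendition has already been reduced to a single tempo estimate and a single energy-style feature, and the `Performance` record, its field names, and the toy values are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Performance:
    """One recorded rendition of a song (hypothetical record layout)."""
    year: int
    tempo_bpm: float   # assumed: a tempo estimate already extracted from the audio
    energy: float      # assumed: some loudness/energy feature, e.g. mean RMS
    home_crowd: bool   # assumed venue metadata: True if played in the band's home town


def mean_tempo_by_year(performances):
    """Average the tempo estimates of all renditions recorded in each year."""
    by_year = defaultdict(list)
    for p in performances:
        by_year[p.year].append(p.tempo_bpm)
    return {year: mean(tempos) for year, tempos in sorted(by_year.items())}


def tempo_trend(yearly):
    """Least-squares slope of yearly mean tempo against year (BPM per year)."""
    years = list(yearly)
    tempos = list(yearly.values())
    x_bar, y_bar = mean(years), mean(tempos)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(years, tempos))
    den = sum((x - x_bar) ** 2 for x in years)
    return num / den if den else 0.0


def mean_energy_home_vs_away(performances):
    """Mean energy feature for home-crowd shows versus all other shows."""
    home = [p.energy for p in performances if p.home_crowd]
    away = [p.energy for p in performances if not p.home_crowd]
    return (mean(home) if home else None, mean(away) if away else None)


# Made-up toy values for illustration only; these are not CALMA results.
shows = [
    Performance(1995, 118.0, 0.20, False),
    Performance(1995, 122.0, 0.25, True),
    Performance(2000, 124.0, 0.22, False),
    Performance(2005, 130.0, 0.30, True),
]
yearly = mean_tempo_by_year(shows)  # → {1995: 120.0, 2000: 124.0, 2005: 130.0}
print(tempo_trend(yearly))          # roughly 1.0 BPM per year for this toy data
print(mean_energy_home_vs_away(shows))
```

A positive slope would suggest the song speeds up over the years; a real analysis over hundreds of renditions would also need to account for noisy tempo estimates and uneven numbers of recordings per year.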

Finally, I’ve recently presented a demonstrator on Semantic Dynamic Notation, tying external context from the web of Linked Data into musical notation expressed using the Music Encoding Initiative (MEI) framework. This technology supports scholarly use cases, such as direct referencing from musicological discussions to specific elements of the musical notation and vice versa, as well as consumer-oriented use cases, such as collaborative annotation and manipulation of musical scores in a live performance context.

10. Which is the area of your practice you enjoy the most?

The best parts are when multiple, disparate data sources are made to work together in synergistic ways, and unified views across the data are possible for the first time. This provides real “aha!” moments, where the hard data modelling work pays off, and complex insight becomes available.

For the second year in a row, C4DM hosted the Hands-on Sound Source Separation Workshop (HOSSS) on Saturday 11th of May, and it was a great success! The workshop originated in 2018 out of students’ frustration at not having enough space to brainstorm at conferences, even though all the relevant experts are in the same place.

The idea is simple: get together and spend the day actively brainstorming and hacking on source separation topics proposed by some of us (so no keynotes, talks, or posters). Everyone is there to engage with each other, so everyone is much more approachable than in a traditional conference setting. The format therefore aims to inspire early-stage researchers in the field and to foster a sense of scientific community by offering a common place for discussion.

The workshop started with an ice-breaking game, followed by quick introductions from all participants. Then, anyone who wanted to propose a topic for discussion did so briefly, explaining their idea on a whiteboard, while we compiled a list of topics on the side. Once everyone who wished to had presented their idea, problem, or interest to the group, we proceeded to vote.

After lunch, some people changed groups. For example, after the two “Antoines” (Liutkus and Deleforge) had a productive Gaussian Processes morning with our PhD candidate Pablo Alvarado, another of our PhD students, Changhong Wang, discovered the world of median-filtering source separation, guided by one of the very best, A. Liutkus.

On Friday 3 November, Dr Brecht De Man (
